While Google isn't ready to commit to a wide release of the AR walking navigation mode for Google Maps, the company has begun testing the feature with members of its Local Guides crowdsourcing community.

As a result, I've had the opportunity to test an alpha build of the feature in the real world (and log some needed steps in the process). I can't stress enough that this is a very early version, so what follows is more a collection of impressions of the experience than a review.

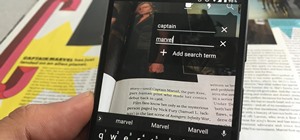

The option for AR navigation appears as a button next to the customary "start navigation" button, as well as on the route overview screen, marked with a cube icon that has become a common symbol for AR across multiple platforms. Once AR navigation begins, the traditional map interface shrinks to the bottom half of the app, and the camera view takes over the top half.

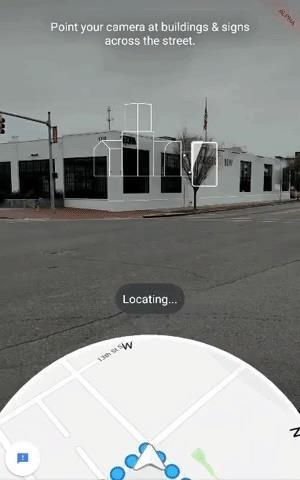

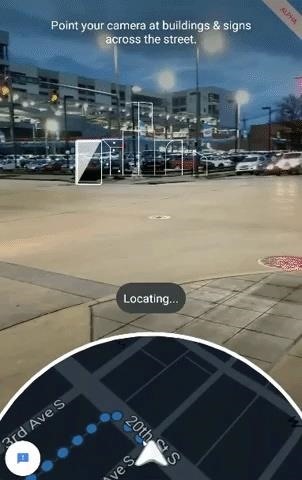

Much as ARKit and ARCore apps have users scan for surfaces, AR navigation in Google Maps instructs users to scan their surroundings for buildings and street signs so the app can orient itself to their location. The app signals the completion of this process with a pop of Google-colored dots marking the points it has identified in its view of the environment.

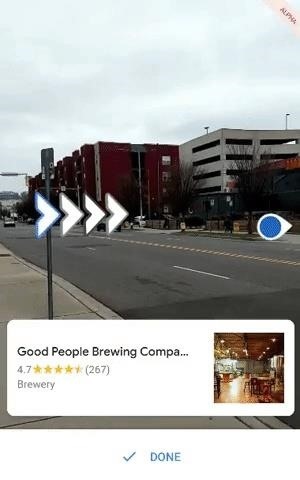

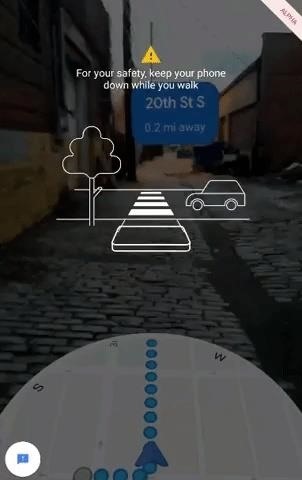

The AR navigation itself is fairly simple. With orientation achieved, prompts in the camera view point users to turn their devices in the right direction. When oriented, users see a blue sign denoting the distance to their next turn. Each turn is designated with large directional arrows that flash in that familiar combination of blue, red, yellow, and green. Finally, as users arrive at their destinations, a map card pops up at the bottom of the screen.

Whenever I've used AR navigation apps in the past, the feature has always felt far better suited to smartglasses. Because the function is meant to be "always on," it's simply not a good user experience to ask users to constantly hold a smartphone (much less a tablet) in front of their faces as they walk.

Google has recognized this shortcoming and actually addressed it as part of the new experience. Once users begin walking their route, the app advises them not to hold their devices in front of them as they walk. If users ignore this initial instruction, the screen darkens. When a user raises the device again, the app repeats the orientation process.

Another usability caveat is that the experience works best during the day, which is to be expected since the app relies on identifying landmarks in the camera view. That said, at night the system can still find its mark in areas with enough lighting. In my limited testing, I also found the feature to be a bit of a battery hog, which isn't surprising given the combination of display-on time, camera operation, and machine learning algorithms running while the app is in use.

Google isn't the first company to launch a mobile AR navigation experience; Hotstepper and Blippar's AR City are among its predecessors. However, Google's flavor of AR navigation marks a significant milestone in the evolution of AR as a consumer platform.

Google Maps remains the top mapping app in the US by a wide margin, according to Statista, with Google-owned Waze sitting at the number two spot. That kind of scale is what the AR industry needs to get AR into the hands of consumers and get them accustomed to AR as a practical tool in their everyday lives before the smartglasses market matures.

It's also a high-water mark for the AR cloud concept. Sure, Google is presenting this as a single feature within Google Maps, but the company has essentially turned Street View, a digital copy of the world, into the reference map for its computer vision capabilities, and is now injecting persistent AR content into it. Along with ARCore, its Cloud Anchors multiplayer protocol for iOS and Android, and its Google Maps API for location-based AR apps, Google could have the makings of a device-agnostic AR cloud platform on its hands.
