As Facebook, Apple, Samsung, and others offer augmented reality selfie effects and content that challenge its platform, Snapchat has continued to innovate with its augmented reality capabilities.

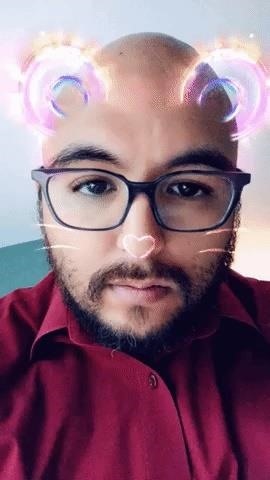

The latest example, released to the app's Carousel on Monday, is a Lens that reacts to sound. The Lens attaches a pair of translucent neon bear ears to the user's head. The ears pulse to whatever music is in the user's vicinity.

According to a company spokesperson, the Lens is the first of many to use the new technology, with other Lenses to follow in the next few weeks.

Based on my limited testing, the sound reaction, at least in this implementation of the Lens, is more sensitive to drum beats than to other sounds. For example, the Lens reacts robustly (above left) to the kick drum in Childish Gambino's "This is America," but the effect is less pronounced with the rapid-fire drumming found in modern metal.

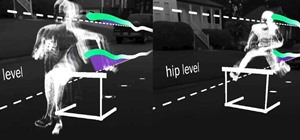

Sound recognition is just the latest advancement in Snapchat's augmented reality competencies. Earlier this year, the company also introduced body tracking. Another magic trick, sky segmentation, was showcased with a flying whale that could disappear behind trees and buildings. More recently, the company has begun to offer hand recognition, with Coors Light among the first brands to take advantage of the capability.

Snapchat faces feverish competition from Facebook (and its photo-sharing app Instagram) for daily active users and advertisers. Facebook announced its own AR advancements, such as hand and body tracking, background segmentation, and image recognition, at its F8 developer conference earlier this month.

At the same time, the AR toolkits from Apple and Google let app developers build their own AR experiences, which compete with Snapchat's brand of augmented reality both for screen time and for advertisers who opt to build standalone apps rather than purchase Sponsored Lenses. Both companies are also innovating at a rapid pace, with each toolkit gaining new features in its latest version.

Snapchat may get credit as an originator in the field, but it won't have the luxury of banking on that status; it will need to continue to lead with technology.
